There’s a big controversy right now about Cambridge Analytica and how they influenced the US presidential election and the Brexit referendum. Some people are even claiming they “hijacked” or “stole” the results.

Me? I’m not buying it.

While it looks like they broke Facebook’s data rules,

(A) Many of the things they’ve been accused of didn’t happen. They couldn’t have happened. They’re literally not possible within Facebook.

(B) And everything else they’ve been accused of, you could do without breaking Facebook’s rules. All you need is a big enough budget, big enough data set, and smart enough marketers.

(C) I’m not sure that CA’s approach is particularly effective.

Let me cover these one by one …

What didn’t happen

In a recent interview, Hillary Clinton said:

“If they were getting advice from, let’s say, Cambridge Analytica or someone else about, ‘OK, here are the 12 voters in this town in Wisconsin — that’s whose Facebook pages you need to be on to send these messages,’ that indeed would be very disturbing.”

Yeah, it would be. Except it doesn’t happen. Facebook doesn’t let you target 12 people. And, frankly, if anyone is targeting 12 people with a political ad, they’re idiots. Complete waste of time.

And, if they were that important, you’d be better off sending them a letter, or visiting them personally.

So that’s my first point: We’re hearing a lot of claims that simply aren’t true. And, more specifically, the more impressive the claim, the less likely it is to be true.

And that’s because …

What did happen was fairly mundane

Facebook advertising is about layering audiences so you end up with a highly targeted message to the best possible prospects.

Some of this involves going after obvious groups. Some of it is seeing connections others wouldn’t see.

Example: a couple of days ago, Justin Brooke, a well-known Facebook ad expert, mentioned he’d targeted people interested in Bigfoot and UFOs when he was promoting a Bitcoin webinar.

Now, as someone who worked in finance, I’d say that says a lot about Bitcoin. But it also says a lot about the hidden psychographic connections you can exploit within Facebook.

So, when I read that Cambridge Analytica had data on 50 million Americans that rated them on the “Big Five” personality traits – openness, conscientiousness, extroversion, agreeableness and neuroticism – I thought, so what?

First, when it comes to understanding people, it’s a pretty blunt tool, as a Bloomberg article on the subject suggests.

Second, if I wanted to target people based on a combination of these qualities, I’d just need to build a list of people who had that combination and then use Facebook’s “Lookalike Audiences” option.

Time consuming? Sure. Rocket science? Not so much.

Third, there are so many ways to get a better understanding of Facebook users – all available within Facebook’s interface.

So, until I hear CA did something I couldn’t figure out myself, I’m not attaching any special importance to them.

Especially given …

Cambridge Analytica aren’t particularly successful

Did you know that, before they worked for Trump, CA worked for Ted Cruz?

They were hired to help him secure the Republican nomination. How did that work out? He got spanked by Donald Trump, who won the contest by a huge margin.

CA were also allegedly helping the Tories before the 2017 election.

How did that go? They lost their majority, despite running against a Labour leader the media told us was unelectable.

So, maybe the Cambridge Analytica salesmen present their approach as a powerful tool. And maybe the media wants to scare us with tales of how their targeting swings elections … but results suggest otherwise.

They win some, they lose some … same as any other political ad agency.

So, if there’s not much to it, why all the hysteria?

I think there are three things driving this:

#1: People with a political agenda – both politicians and media outlets. They’re jumping all over this story because it suits their narrative that Trump/Brexit couldn’t be legitimate.

#2: Journalists have zero hands-on experience of Facebook advertising, so they can’t tell the difference between reality and spin.

#3: Real concerns about online privacy. This is why, when the story broke, I told people this is a Facebook story, rather than a Cambridge Analytica story.

To me, the big story here is that people could sign up to an app and, by signing up, give away personal information about their friends.

Think about it: if you and I were Facebook friends and I signed up to the app, the app could access your Facebook data. You wouldn’t have given it permission, but it could do it anyway.

Morally, that’s just wrong. And, although Facebook has changed their policies so that can’t happen, it’s hard to imagine why they ever thought it was OK.

They now have to explain themselves. And, in my opinion, deservedly so.

The ethics of using people’s personal information to target ads

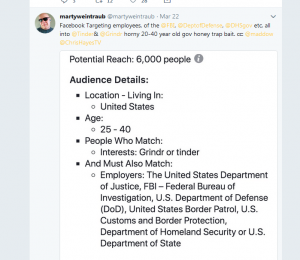

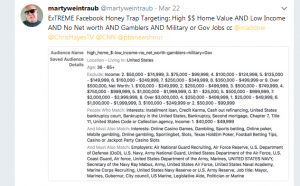

I want to show you a couple of recent tweets by Marty Weintraub – one of the best creative thinkers when it comes to targeting Facebook ads…

This one is, “Targeting employees, of the @FBI, @DeptofDefense, @DHSgov etc. all into @Tinder& @Grindr horny 20-40 year old gov honey trap bait”

And this one is, “ExTREME Facebook Honey Trap Targeting: High $$ Home Value AND Low Income AND No Net worth AND Gamblers AND Military or Gov Jobs”

Marty’s showing just how much detail is available to advertisers in the USA, much of it from third parties such as Experian. (Far more than is available here.)

And, as he shows, it could be used for very shady purposes.

Something about this feels wrong.

Facebook must agree, because they’ve just announced they’ll phase out these third-party data segments. A PR exercise, no doubt. With the media asking questions, they clearly felt they couldn’t publicly defend letting advertisers target this data.

It’s a step in the right direction, but you should still assume that anything you do on Facebook is public – and that skilled advertisers will continue to be able to analyse your activity and know a lot about you.

Because I don’t see any way they can phase that out without killing their business model.

Steve